A-REX data transfer framework (DTR) technical description

This page describes the data staging framework for ARC, code-named DTR (Data Transfer Reloaded).

Overview

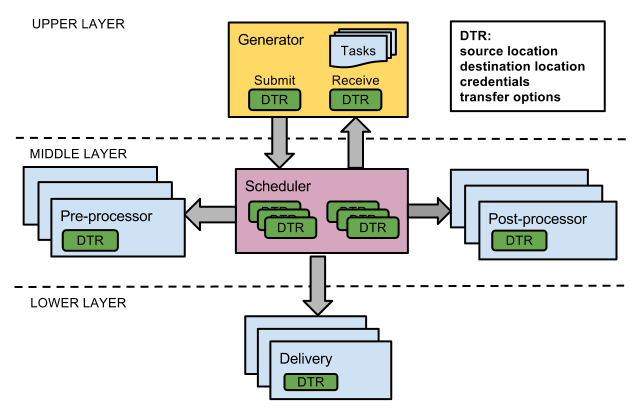

ARC’s Computing Element (A-REX) performs the task of data transfer for jobs before and after the jobs run. The requirements and the design steps for the data staging framework are described in DTR Design and Implementation Details. The framework is called DTR (Data Transfer Reloaded) and uses a three-layer architecture, shown in the figure below:

Fig. 11 DTR three-layer architecture

The Generator takes the tasks supplied by the user and constructs one Data Transfer Request (also abbreviated DTR) per file that needs to be transferred. These DTRs are sent to the Scheduler for processing. The Scheduler sends DTRs to the Pre-processor for anything that needs to be done before the physical transfer takes place (e.g. cache check, resolve replicas) and then to Delivery for the transfer itself. Once the transfer has finished, the Post-processor handles any post-transfer operations (e.g. register replicas, release requests). The number of slots available for each component is limited, so the Scheduler controls the queues and decides when to allocate slots to specific DTRs, based on the prioritisation algorithm implemented. See DTR priority and shares system for more information.
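The flow described above can be illustrated with a minimal Python sketch. It is not the libarcdatastaging implementation: the class name, the stage names and the "highest priority first, then first come first served" rule are assumptions made for illustration, and the real prioritisation algorithm is the one described in DTR priority and shares system.

```python
import heapq
from itertools import count

# Hypothetical stage names mirroring the three processing steps in the text.
STAGES = ("pre-processor", "delivery", "post-processor")


class Scheduler:
    """Toy scheduler: each stage has a limited number of slots per pass."""

    def __init__(self, slots):
        self.slots = slots                      # stage name -> slots per pass
        self.queues = {s: [] for s in STAGES}   # stage name -> priority heap
        self.finished = []                      # names of completed DTRs
        self._seq = count()                     # FIFO tie-breaker

    def receive(self, name, priority, stage="pre-processor"):
        # heapq is a min-heap, so the priority is negated to pop highest first.
        heapq.heappush(self.queues[stage], (-priority, next(self._seq), name))

    def step(self):
        """One pass: fill the free slots of every stage, then advance those DTRs."""
        moved = []
        for index, stage in enumerate(STAGES):
            queue = self.queues[stage]
            for _slot in range(min(self.slots[stage], len(queue))):
                neg_priority, _order, name = heapq.heappop(queue)
                moved.append((index + 1, -neg_priority, name))
        for next_index, priority, name in moved:
            if next_index == len(STAGES):
                self.finished.append(name)      # back to the Generator
            else:
                self.receive(name, priority, STAGES[next_index])

    def run(self):
        while any(self.queues.values()):
            self.step()
        return self.finished


if __name__ == "__main__":
    scheduler = Scheduler({"pre-processor": 2, "delivery": 1, "post-processor": 2})
    for file_name, priority in [("a", 50), ("b", 80), ("c", 20)]:
        scheduler.receive(file_name, priority)
    print(scheduler.run())
```

With only one delivery slot, the DTR with the highest priority is transferred first while the others wait in the delivery queue, which is the behaviour the slot limits are meant to produce.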

This layered architecture allows any implementation of a particular component to be easily substituted for another, for example a GUI with which users can enter DTRs (Generator) or an external point-to-point file transfer service (Delivery).

Implementation

The middle and lower layers of the architecture (Scheduler, Processor and Delivery) are implemented as a separate library libarcdatastaging (in src/libs/data-staging in the ARC source tree). This library is included in the nordugrid-arc common libraries package. It depends on some other common ARC libraries and the DMC modules (which enable various data access protocols and are included in nordugrid-arc-plugins-* packages) but is independent of other components such as A-REX or ARC clients. A simple Generator is included in this library for testing purposes. A Generator for A-REX is implemented in src/services/a-rex/grid-manager/jobs/DTRGenerator.(h|cpp), which turns job descriptions into data transfer requests.

Configuration

Data staging is configured through the [arex/data-staging] block in arc.conf.

Reasonable default values exist for all parameters, but the [arex/data-staging] block can be used to tune them and to enable multi-host data staging. A selection of parameters is shown below:

| Parameter | Explanation | Default Value |
|---|---|---|
| maxdelivery | Maximum delivery slots | 10 |
| maxprocessor | Maximum processor slots per state | 10 |
| maxemergency | Maximum emergency slots for delivery and processor | 1 |
| maxprepared | Maximum prepared files (for example pinned files using SRM) | 200 |
| sharetype | Transfer share scheme (dn, voms:vo, voms:group or voms:role) | None |
| definedshare | Defined share and priority | _default 50 |
| Multi-host related parameters | | |
| deliveryservice | URL of remote host which can perform data delivery | None |
| localdelivery | Whether local delivery should also be done | no |
| remotesizelimit | File size limit (in bytes) below which local transfer is always used | 0 |
| usehostcert | Whether the host certificate should be used in communication with remote delivery services instead of the user’s proxy | no |

Descriptions of the other data-staging parameters can be found in [arex/data-staging] block. The multi-host parameters are explained in more detail in ARC Data Delivery Service Technical Description.

Example:

    [arex/data-staging]
    maxdelivery = 10
    maxprocessor = 20
    maxemergency = 2
    maxprepared = 50
    sharetype = voms:role
    definedshare = myvo:production 80
    deliveryservice = https://spare.host:60003/datadeliveryservice
    localdelivery = yes
    remotesizelimit = 1000000
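To show how the defaults from the table combine with values set in the block, here is a small Python sketch. It assumes the fragment can be read with the standard configparser module, which is only an approximation: A-REX uses its own arc.conf parser, and configparser would, for instance, reject a block that repeats the same option name. The function name and the DEFAULTS dictionary are illustrative only.

```python
import configparser

# Defaults copied from the parameter table; options whose default is "None"
# (sharetype, deliveryservice) are simply absent unless configured.
DEFAULTS = {
    "maxdelivery": "10",
    "maxprocessor": "10",
    "maxemergency": "1",
    "maxprepared": "200",
    "localdelivery": "no",
    "remotesizelimit": "0",
    "usehostcert": "no",
}

SAMPLE = """
[arex/data-staging]
maxdelivery = 10
maxprocessor = 20
deliveryservice = https://spare.host:60003/datadeliveryservice
localdelivery = yes
remotesizelimit = 1000000
"""


def load_staging_options(text):
    """Return the effective data-staging options as a dict of strings."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(text)
    options = dict(DEFAULTS)
    if parser.has_section("arex/data-staging"):
        # Values from the block override the defaults.
        options.update(parser["arex/data-staging"])
    return options


if __name__ == "__main__":
    for key, value in sorted(load_staging_options(SAMPLE).items()):
        print(f"{key} = {value}")
```

Options that are not set in the block, such as maxemergency here, keep their default value.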

Client-side priorities

To specify the priority of jobs on the client side, the priority element can be added to an xRSL job description, e.g.:

("priority" = "80")

For a full explanation of how priorities work see DTR priority and shares system.

gm-jobs -s

The command “gm-jobs -s”, which shows transfer share information, now reports it at the per-file level rather than per-job. The number under “Preparing” is the number of DTRs in the TRANSFERRING state, i.e. performing the physical transfer. DTRs in all other states count towards the “Pending” files. For example:

    Preparing/Pending files Transfer share
    2/86 atlas:null-download
    3/32 atlas:production-download
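For scripts that need these numbers, the listing above can be turned into a dictionary with a few lines of Python. This is a sketch that assumes the layout shown in the example (a header line followed by "preparing/pending share" lines); the function name is hypothetical and the exact whitespace of the real gm-jobs output may differ.

```python
def parse_transfer_shares(output):
    """Map each transfer share to a (preparing, pending) tuple of file counts."""
    shares = {}
    for line in output.splitlines():
        parts = line.split()
        # Data lines have exactly two fields, e.g. "2/86 atlas:null-download".
        # The header and blank lines do not match and are skipped.
        if len(parts) != 2:
            continue
        preparing, separator, pending = parts[0].partition("/")
        if not (separator and preparing.isdigit() and pending.isdigit()):
            continue
        shares[parts[1]] = (int(preparing), int(pending))
    return shares


if __name__ == "__main__":
    sample = (
        "Preparing/Pending files Transfer share\n"
        "2/86 atlas:null-download\n"
        "3/32 atlas:production-download\n"
    )
    for share, (preparing, pending) in parse_transfer_shares(sample).items():
        print(share, preparing, pending)
```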

As before, per-job logging information is in the controldir/job.id.errors files, but A-REX can also be configured through the logfile parameter to additionally log all DTR messages to a central log file.

Using DTR in third-party applications

ARC SDK Documentation gives examples on how to integrate DTR in third-party applications.

Supported Protocols

The following access and transfer protocols are supported. Note that third-party transfer is not supported.

- file
- HTTP(s/g)
- GridFTP
- SRM
- Xrootd
- LDAP
- Rucio
- S3
- ACIX
- RFIO/DCAP/LFC (through GFAL2 plugins)

Multi-host Data Staging

To increase overall bandwidth, multiple hosts can be used to perform the physical transfers. See ARC Data Delivery Service Technical Description for details.
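The interplay of the deliveryservice, localdelivery and remotesizelimit parameters can be pictured with the following Python sketch. It is based only on the parameter explanations in the configuration table. The function name is hypothetical, and picking at random among the eligible endpoints is an assumption of this sketch, not a statement about how the Scheduler actually chooses. ARC Data Delivery Service Technical Description remains the authoritative reference.

```python
import random

LOCAL = "local"  # hypothetical marker for delivery on the A-REX host itself


def choose_delivery(file_size, delivery_services, local_delivery=False,
                    remote_size_limit=0, rng=random):
    """Pick where the physical transfer of one file could run.

    file_size and remote_size_limit are in bytes; delivery_services is the
    list of configured deliveryservice URLs.
    """
    if not delivery_services:
        return LOCAL                    # no remote hosts configured
    if file_size < remote_size_limit:
        return LOCAL                    # small files are always transferred locally
    candidates = list(delivery_services)
    if local_delivery:
        candidates.append(LOCAL)        # local delivery is used in addition
    return rng.choice(candidates)       # assumption: random choice among candidates


if __name__ == "__main__":
    services = ["https://spare.host:60003/datadeliveryservice"]
    print(choose_delivery(500, services, remote_size_limit=1000000))
    print(choose_delivery(5000000, services, remote_size_limit=1000000))
```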

Monitoring

In A-REX the state, priority and share of all DTRs are logged to the file controldir/dtr.state periodically (every second). This file can then be used by the Gangliarc framework to show data staging information as ganglia metrics.

Advantages

DTR offers many advantages over the previous system, including:

- High performance

When a transfer finishes in Delivery, there is always another prepared and ready, so the network is always fully used. A file stuck in a pre-processing step does not block others that are preparing, nor does it affect any physical transfers running or queued. Cached files are processed instantly rather than waiting behind those that need to be transferred. Bulk calls are implemented for some operations of indexing catalogs and SRM protocols.

- Fast

All state is held in memory, which enables extremely fast queue processing. The system knows which files are writing to cache and so does not need to constantly poll the file system for lock files.

- Clean

When a DTR is cancelled mid-transfer, the destination file is deleted and all resources such as SRM pins and cache locks are cleaned up before returning the DTR to the Generator. On A-REX shutdown all DTRs can be cleanly cancelled in this way.

- Fault tolerance

The state of the system is frequently dumped to a file, so in the event of crash or power cut, this file can be read to recover the state of ongoing transfers. Transfers stopped mid-way are automatically restarted after cleaning up the half-finished attempt.

- Intelligence

Error handling is vastly improved, so temporary errors caused by network glitches, timeouts, busy remote services, etc. are retried transparently.

- Prioritisation

Both the server admins and users have control over which data transfers have which priority.

- Monitoring

Admins can see the state of the system at a glance, and using a standard framework like Ganglia means they can monitor ARC in the same way as the rest of their system.

- Scalable

An arbitrary number of extra hosts can be easily added to the system to scale up the bandwidth available. The system has been tested with up to tens of thousands of concurrent DTRs.

- Configurable

The system can run with no configuration changes, or many detailed options can be tweaked.

- Generic flexible framework

The framework is not specific to ARC’s Computing Element (A-REX) and can be used by any generic data transfer application.
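The transparent retry of temporary errors mentioned under Intelligence can be illustrated with a short Python sketch. The exception classes, the function name and the retry count are assumptions made for this example; they do not reflect the actual error classification or retry policy used by DTR.

```python
import time


class TemporaryError(Exception):
    """Hypothetical error worth retrying (network glitch, timeout, busy service)."""


class PermanentError(Exception):
    """Hypothetical error that retrying cannot fix (e.g. file does not exist)."""


def transfer_with_retries(transfer, max_tries=3, delay=0.0, sleep=time.sleep):
    """Call transfer() until it succeeds or max_tries attempts have been made.

    TemporaryError triggers another attempt after `delay` seconds; any other
    exception, including PermanentError, is raised immediately.
    """
    for attempt in range(1, max_tries + 1):
        try:
            return transfer()
        except TemporaryError:
            if attempt == max_tries:
                raise                   # give up and report the last error
            sleep(delay)


if __name__ == "__main__":
    attempts = []

    def flaky_transfer():
        attempts.append(1)
        if len(attempts) < 3:
            raise TemporaryError("remote service busy")
        return "transferred"

    print(transfer_with_retries(flaky_transfer), "after", len(attempts), "attempts")
```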

Open Issues

- Provide a way for the infosys to obtain DTR status information

  - First basic implementation: when a DTR changes state, write the current state to the .input or .output file

- Decide whether or not to cancel all DTRs in a job when one fails

  - Current logic: if downloading, cancel all DTRs in the job; if uploading, don’t cancel any

  - Should be configurable by the user; the EMI execution service interface also allows specifying per file what to do in case of error

- Priorities: more sophisticated algorithms for handling priorities

- Advanced features such as pausing and resuming transfers
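The current cancellation logic listed above can be written down as a small Python sketch. The function and argument names are hypothetical; it only restates the rule "if downloading, cancel all DTRs in the job, if uploading don't cancel any" and does not reproduce the A-REX Generator code.

```python
def dtrs_to_cancel(active_dtrs, failed_dtr, downloading):
    """Return the DTRs of a job that should be cancelled after one has failed.

    active_dtrs: names of the job's DTRs that are still being processed
    failed_dtr:  name of the DTR that failed
    downloading: True for input (download) staging, False for output (upload)
    """
    if not downloading:
        return []                       # uploads: let the other files continue
    # Downloads: the job cannot run without all inputs, so cancel the rest.
    return [name for name in active_dtrs if name != failed_dtr]


if __name__ == "__main__":
    job_files = ["input1", "input2", "input3"]
    print(dtrs_to_cancel(job_files, "input2", downloading=True))
    print(dtrs_to_cancel(job_files, "input2", downloading=False))
```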